Google Unveils “Private AI Compute”: Making AI Smarter and Safer

Google has taken a major step in privacy-focused artificial intelligence with the launch of Private AI Compute. This new platform is designed to provide powerful AI features while keeping user data secure and private. The announcement highlights a growing challenge in AI: delivering advanced cloud-level performance without compromising the confidentiality of personal information.

Meeting the Challenge of On-Device AI

AI is increasingly moving onto smartphones and other personal devices. Running AI locally keeps your data on your device, which is great for privacy. However, limited processing power and memory make it hard for these devices to handle complex AI tasks.

Google acknowledges this trade-off. Basic AI tasks, such as checking the weather or handling simple voice commands, run easily on the device itself. More advanced tasks, such as predictive assistance, personalized recommendations, and multi-language transcription, often exceed what the device can handle.

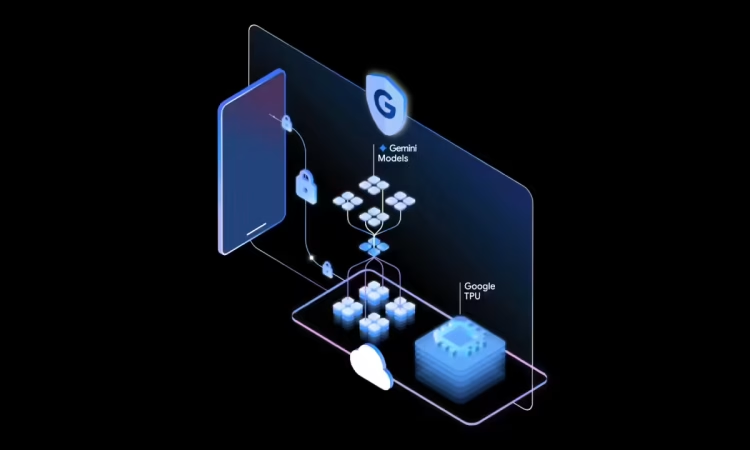

Private AI Compute bridges this gap. It allows devices to tap into cloud-scale AI models, including Google’s Gemini family, while keeping the privacy standards of local processing intact.

What is Private AI Compute?

Private AI Compute is a secure cloud-based environment that processes sensitive data without giving anyone, including Google employees, access to it. Its key protections include:

- Data isolation and encryption – ensures your information cannot be intercepted or seen.

- Verified device connections – only trusted devices can access the system.

- Ephemeral computation – inputs and results are discarded after each session to limit exposure.
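To make ephemeral computation more concrete, here is a minimal Python sketch, not Google's implementation. A hypothetical `EphemeralSession` holds a request's data only for the duration of a `with` block and overwrites and clears its buffer when the block exits, even if processing raises an error. The class, the `ephemeral_session` helper, and the byte-counting stand-in for a model are all illustrative assumptions.

```python
from contextlib import contextmanager


class EphemeralSession:
    """Hypothetical session that keeps request data only while it is open."""

    def __init__(self) -> None:
        self._buffer = bytearray()
        self.closed = False

    def load(self, data: bytes) -> None:
        if self.closed:
            raise RuntimeError("session already discarded")
        self._buffer.extend(data)

    def process(self) -> int:
        # Stand-in for running a model: just report how many bytes were received.
        if self.closed:
            raise RuntimeError("session already discarded")
        return len(self._buffer)

    def discard(self) -> None:
        # Best-effort wipe: overwrite every byte, then empty the buffer.
        for i in range(len(self._buffer)):
            self._buffer[i] = 0
        self._buffer.clear()
        self.closed = True


@contextmanager
def ephemeral_session():
    session = EphemeralSession()
    try:
        yield session
    finally:
        # Runs on normal exit and on exceptions, so data never outlives the session.
        session.discard()


with ephemeral_session() as s:
    s.load(b"meeting notes")
    result = s.process()

print(result)  # 13
```

Python cannot guarantee that no other copies of the data linger in memory, which is one reason real systems enforce this kind of guarantee with hardware-backed TEEs rather than in application code alone.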

The platform combines Google’s custom Tensor Processing Units (TPUs) with Trusted Execution Environments (TEEs) to create a sealed, verified space for AI processing. Before any data is exchanged, devices and servers verify each other’s integrity (a process known as remote attestation), so computations happen in a secure, tamper-resistant environment.
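The mutual verification step can be illustrated with a simplified Python sketch. It assumes each side's software is identified by a SHA-256 "measurement", that each side keeps a hypothetical allowlist of measurements it trusts, and that every report is bound to a fresh random nonce so an old report cannot be replayed. As a deliberate simplification, a shared HMAC key stands in for the hardware root of trust; real TEE attestation relies on asymmetric keys anchored in the hardware, and none of these function names come from Google.

```python
import hashlib
import hmac
import secrets

# Hypothetical stand-in for a hardware root of trust. Real attestation reports
# are signed with asymmetric keys held by the hardware, not a shared HMAC key.
ROOT_KEY = secrets.token_bytes(32)


def measure(software: bytes) -> str:
    """Identify a software build by its SHA-256 digest."""
    return hashlib.sha256(software).hexdigest()


def make_report(software: bytes, nonce: bytes) -> dict:
    """Produce an attestation report bound to the verifier's nonce."""
    m = measure(software)
    tag = hmac.new(ROOT_KEY, nonce + m.encode(), hashlib.sha256).hexdigest()
    return {"measurement": m, "tag": tag}


def verify_report(report: dict, nonce: bytes, allowed: set[str]) -> bool:
    """Check the report is authentic, fresh, and from an approved build."""
    expected = hmac.new(
        ROOT_KEY, nonce + report["measurement"].encode(), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, report["tag"]) and report["measurement"] in allowed


def mutual_attest(device_sw: bytes, server_sw: bytes,
                  device_trusts: set[str], server_trusts: set[str]) -> bool:
    # Each side challenges the other with its own fresh nonce.
    device_nonce = secrets.token_bytes(16)
    server_nonce = secrets.token_bytes(16)
    server_report = make_report(server_sw, device_nonce)
    device_report = make_report(device_sw, server_nonce)
    server_ok = verify_report(server_report, device_nonce, device_trusts)  # device checks server
    device_ok = verify_report(device_report, server_nonce, server_trusts)  # server checks device
    return server_ok and device_ok


DEVICE_SW = b"device-os-build-1"   # hypothetical builds
SERVER_SW = b"tee-server-build-7"
device_trusts = {measure(SERVER_SW)}
server_trusts = {measure(DEVICE_SW)}

print(mutual_attest(DEVICE_SW, SERVER_SW, device_trusts, server_trusts))          # True
print(mutual_attest(DEVICE_SW, b"tampered-server", device_trusts, server_trusts))  # False
```

The point of the design is that verification runs in both directions: the device refuses to send data to a server build it does not recognize, and the server refuses requests from devices it cannot verify.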

Why Private AI Compute Matters

As AI models become larger and more complex, they need cloud-level computing power to operate effectively. At the same time, users are increasingly concerned about privacy. Questions like “Who has access to my voice recordings?” or “Where are my transcripts stored?” are becoming more common.

Private AI Compute offers a solution: you get powerful AI features without giving up control of your data. It lets devices take on demanding AI tasks that on-device hardware alone could not handle, while still maintaining strong privacy assurances.

Real-World Applications

Google has shared examples of how Private AI Compute will enhance services:

- Smart suggestions: Pixel devices will see improved predictions in messaging, calendar, and photo apps. The AI can offer context-aware recommendations without exposing sensitive data, even though the heavy processing happens in the cloud.

- Enhanced transcription: Apps like Recorder will process and summarize transcriptions in more languages, powered by cloud-scale AI, all while keeping your data private.

These examples demonstrate how AI can become more personalized and proactive while remaining privacy-conscious.

The Technology Behind the Scenes

Private AI Compute relies on multiple security layers:

- Custom TPUs for high-performance AI processing.

- Trusted Execution Environments (TEEs) that protect memory and computations from unauthorized access.

- Secure network flows to obscure user identity and location.

- Rigorous supply-chain controls, including binary authorization and workload isolation, which reduce the attack surface.
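The “secure network flows” item can be pictured as a relay sitting between the device and the cloud. The Python sketch below is a hypothetical illustration, not a description of Google's network: the relay replaces the client's source address with its own and drops headers that could identify the user, while forwarding the body untouched. It assumes the body is already end-to-end encrypted to the attested server, so the relay never sees the content; the `IDENTIFYING_HEADERS` set and the `relay` function are illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical set of headers treated as identifying (compared case-insensitively).
IDENTIFYING_HEADERS = {"x-forwarded-for", "user-agent", "cookie", "x-device-id"}


@dataclass(frozen=True)
class Request:
    source_ip: str
    headers: dict[str, str]
    body: bytes  # assumed end-to-end encrypted; the relay never decrypts it


def relay(request: Request, relay_ip: str) -> Request:
    """Forward a request with the client's address and identifying headers removed."""
    kept = {
        name: value
        for name, value in request.headers.items()
        if name.lower() not in IDENTIFYING_HEADERS
    }
    # The server sees the relay's address, not the user's.
    return Request(source_ip=relay_ip, headers=kept, body=request.body)


incoming = Request(
    source_ip="203.0.113.7",
    headers={
        "User-Agent": "Pixel",
        "X-Device-Id": "abc123",
        "Content-Type": "application/octet-stream",
    },
    body=b"\x8f\x02ciphertext",
)
outgoing = relay(incoming, relay_ip="198.51.100.1")
print(outgoing.source_ip)  # 198.51.100.1
print(outgoing.headers)    # {'Content-Type': 'application/octet-stream'}
```

Stripping metadata at a relay only hides who sent a request; the encryption and attestation layers are what protect the content itself.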

Google says that even it cannot access your raw data, so the platform aims to offer a level of privacy similar to on-device processing while drawing on cloud-scale AI power.

Considerations and Challenges

While the platform is promising, several factors will influence its real-world impact:

- Device availability: Initially, it targets Pixel devices and select features. Expansion to other devices is still uncertain.

- Trust and transparency: Users must trust that the hardware, encryption, and verification protocols work as described. Independent audits and public reporting will help maintain that trust.

- Network dependency: Cloud-based processing requires a network connection, so latency or poor connectivity could affect real-time applications.

- Regulatory compliance: Regions differ in their rules on cloud-based AI, data sovereignty, and encryption, and deployments must navigate each of these frameworks.

Despite these challenges, the platform represents a significant step toward privacy-first AI.

Implications for Users

For consumers, Private AI Compute offers a shift in how AI interacts with personal data:

- Enhanced features: Enjoy smarter recommendations, summarization, and predictive actions.

- Data protection: Sensitive information remains private, even when processed in the cloud.

- Regulatory alignment: The privacy-first design is intended to align with emerging privacy regulations, which could make it an attractive choice for privacy-conscious users.

In countries with growing smartphone adoption and evolving data laws, Private AI Compute could become a standout feature for both devices and services.

Looking Ahead

Private AI Compute presents a vision for the future: combining cloud-level AI intelligence with the privacy of on-device processing. Its potential goes beyond smartphones to tablets, laptops, and enterprise applications, offering powerful AI without compromising personal data.

With secure, verified, and temporary computation sessions, Google is paving the way for AI systems that are both capable and responsible. As adoption grows, this platform may redefine how users, developers, and businesses balance innovation with privacy.

In short: Private AI Compute could make your devices smarter, your apps more context-aware, and AI services more trustworthy, all while keeping your data safe. Privacy-first AI is no longer just a concept; it is starting to become a reality.