How Global AI Leaders Are Collaborating to Build Ethical and Responsible Systems

As artificial intelligence (AI) continues to transform industries, economies, and daily life, one question stands out: how do we make sure AI evolves in ways that are safe, fair, and transparent? Around the world, governments, tech companies, researchers, and advocacy groups are coming together to answer that question—working to build ethical and responsible AI systems that truly serve humanity.

This growing collaboration represents a major shift in the global tech landscape. It’s not just about pushing boundaries anymore; it’s about ensuring progress happens responsibly.

The Need for Global Cooperation

AI doesn’t stop at borders. Systems designed in one country can easily influence markets, policies, and people halfway across the world. That global reach makes ethical questions—like bias, data privacy, accountability, and environmental impact—impossible to solve in isolation.

Policymakers increasingly recognize that no single organization or nation can manage AI’s risks alone. What’s emerging instead is a collective effort: cross-border partnerships, shared research, and policy dialogues aimed at ensuring AI systems are not just powerful but principled.

The Role of Global Governance Initiatives

One of the most encouraging trends in recent years is the rise of global AI governance frameworks. Organizations like UNESCO, the OECD, and the Global Partnership on AI (GPAI) are leading the charge, developing ethical guidelines and helping countries craft better AI policies.

- UNESCO’s Recommendation on the Ethics of Artificial Intelligence, adopted by all 193 UNESCO member states in November 2021, is one of the broadest global efforts to date. It highlights human rights, inclusivity, and sustainability as core principles of responsible AI.

- Similarly, the OECD’s AI Principles, first adopted in 2019 and endorsed by over 40 countries, call for transparency, accountability, and robustness in how AI systems are built and used.

While these guidelines aren’t legally binding, they have set the foundation for shared norms across borders—helping ensure AI built in one region can be trusted and used safely in another.

Industry Leaders Join Forces

Governments may set the rules, but the tech industry is where ethical AI becomes reality. Major companies such as Google, Microsoft, IBM, and OpenAI have published their own responsible AI principles and are working to apply them in how they build products.

A prime example is the Partnership on AI, launched in 2016. It includes more than 100 members, ranging from major tech companies to nonprofits and universities, all focused on promoting best practices for ethical AI. Its work covers crucial topics such as fairness, transparency, and the social impact of automation.

To make responsible AI easier to build, many companies are also releasing open-source tools. For instance:

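- IBM’s AI Fairness 360 toolkit provides metrics for detecting bias in datasets and models, along with algorithms for reducing it.

- Google’s Model Cards offer a standard format for documenting a machine learning model’s intended use, performance, and known limitations, making models more understandable and transparent.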

These initiatives show that ethical design isn’t just a buzzword—it’s becoming an operational standard.
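To give a sense of what a fairness toolkit like AI Fairness 360 measures, here is a minimal sketch in plain Python of two common group-fairness metrics: statistical parity difference and disparate impact. This is not AI Fairness 360’s own API. The predictions and group labels are made-up illustrative data, and the 0.8 cutoff is the commonly cited "four-fifths rule" heuristic, used here as an assumption rather than a universal standard.

```python
from typing import Sequence


def selection_rate(preds: Sequence[int], groups: Sequence[str], group: str) -> float:
    """Fraction of members of `group` who received a positive prediction (1)."""
    members = [p for p, g in zip(preds, groups) if g == group]
    if not members:
        raise ValueError(f"no examples for group {group!r}")
    return sum(members) / len(members)


def statistical_parity_difference(preds, groups, unprivileged, privileged) -> float:
    """P(pred=1 | unprivileged) - P(pred=1 | privileged); 0.0 means equal rates."""
    return selection_rate(preds, groups, unprivileged) - selection_rate(preds, groups, privileged)


def disparate_impact(preds, groups, unprivileged, privileged) -> float:
    """P(pred=1 | unprivileged) / P(pred=1 | privileged); 1.0 means equal rates."""
    priv_rate = selection_rate(preds, groups, privileged)
    if priv_rate == 0:
        raise ValueError("privileged group has no positive predictions")
    return selection_rate(preds, groups, unprivileged) / priv_rate


# Hypothetical loan-approval predictions (1 = approved) for two made-up groups.
preds = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]

spd = statistical_parity_difference(preds, groups, unprivileged="B", privileged="A")
di = disparate_impact(preds, groups, unprivileged="B", privileged="A")
print(f"statistical parity difference: {spd:.2f}")  # -0.20
print(f"disparate impact: {di:.2f}")  # 0.67

# Assumed threshold: the "four-fifths rule" flags ratios below 0.8 for review.
if di < 0.8:
    print("potential adverse impact: investigate before deployment")
```

Here group B is approved 40% of the time versus 60% for group A, so the ratio falls below the assumed 0.8 cutoff. A metric like this only flags a disparity; deciding whether it is unjustified still requires human judgment about the context.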

Academia and Research Collaboration

Universities and research institutions play a major role in grounding AI innovation in ethics. Across the world, collaborative academic programs are blending computer science with philosophy, law, and social sciences to address AI’s societal effects.

Notable examples include:

- The Centre for the Governance of AI (GovAI), which grew out of the University of Oxford and works with global think tanks to study long-term AI risks and policy.

- The Stanford Institute for Human-Centered Artificial Intelligence (HAI), which partners internationally to develop AI that improves human welfare.

These efforts are training a new generation of AI leaders—experts who see responsibility and innovation as two sides of the same coin.

Government and Regulatory Efforts

Governments worldwide are moving from discussion to action, aligning policies through joint efforts and regional frameworks.

- The European Union’s AI Act, adopted in 2024, regulates AI systems according to their level of risk and is widely seen as a global benchmark for AI regulation, with policymakers in Asia and North America studying its structure.

- The U.S.–EU Trade and Technology Council is helping harmonize standards on data governance and AI accountability.

- Across Asia, nations like Japan, Singapore, and South Korea are working together to standardize ethical AI frameworks.

- India, through the Global Partnership on AI, is emphasizing inclusive and socially responsible AI innovation.

Even geopolitical rivals such as the U.S. and China are beginning to discuss cooperation on AI safety—especially to prevent misuse in military or security applications.

The Growing Importance of Ethical Standards

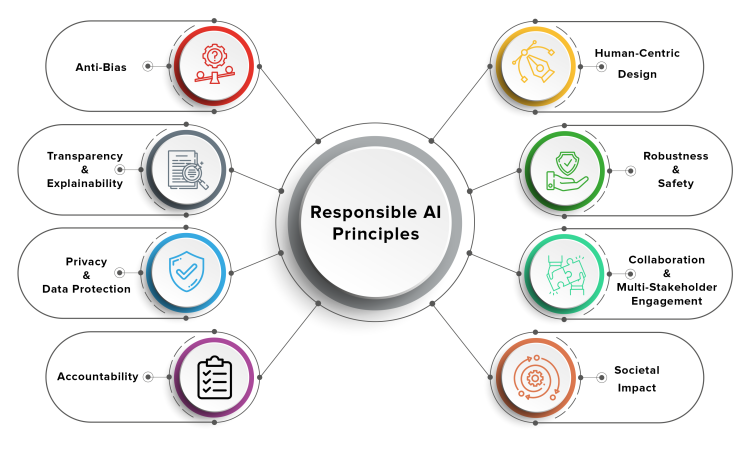

At the core of all these collaborations lies one truth: ethical AI builds trust. Without transparency and accountability, AI risks amplifying biases, violating privacy, and losing public confidence.

Ethical standards help ensure that AI reflects democratic values and human rights. They promote systems that are:

- Explainable – Users can understand how decisions are made.

- Auditable – Developers can check for fairness and safety.

- Secure – Models are protected from manipulation or misuse.

In fact, responsible practice is increasingly a competitive advantage. Businesses that demonstrate responsibility earn consumer trust and investor confidence. Ethics, once seen as a constraint, is becoming a catalyst for innovation.
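For the simplest kind of model, the "explainable" property listed above can be made concrete. In a linear scoring model, each feature’s contribution to a decision is its weight multiplied by how far the input differs from a reference (baseline) input, and those contributions add up exactly to the change in score. The sketch below assumes a hypothetical credit-scoring model with made-up feature names, weights, and baseline. Real AI systems are rarely this simple and usually need dedicated attribution methods.

```python
# Hypothetical linear scoring model: score = BIAS + sum(weight * feature value).
# Feature names, weights, and the baseline are illustrative assumptions.
WEIGHTS = {"income": 0.5, "debt_ratio": -2.0, "years_employed": 0.25}
BIAS = -1.0


def score(x: dict[str, float]) -> float:
    """Linear score for one input."""
    return BIAS + sum(WEIGHTS[name] * x[name] for name in WEIGHTS)


def explain(x: dict[str, float], baseline: dict[str, float]) -> dict[str, float]:
    """Per-feature contributions relative to a baseline input.

    For a linear model these sum exactly to score(x) - score(baseline).
    """
    return {name: WEIGHTS[name] * (x[name] - baseline[name]) for name in WEIGHTS}


applicant = {"income": 4.0, "debt_ratio": 0.6, "years_employed": 2.0}
baseline = {"income": 3.0, "debt_ratio": 0.3, "years_employed": 4.0}

contribs = explain(applicant, baseline)
# List features from most to least influential for this decision.
for name, c in sorted(contribs.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name:>15}: {c:+.2f}")
print(f"score change vs baseline: {score(applicant) - score(baseline):+.2f}")
```

In this example the applicant’s higher debt ratio lowers the score the most (-0.60), which is the kind of plain statement a user could be given about how a decision was reached.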

The Challenges Ahead

Despite this progress, reaching global consensus on AI ethics is hard. Differences in culture, politics, and law make uniform standards difficult to create, and questions around data sovereignty, intellectual property, and algorithmic transparency often spark debate.

And while setting guidelines is important, implementing them is the real test. Many organizations still struggle to turn principles into daily practice—especially when commercial pressure favors speed over caution.

Experts are calling for stronger accountability tools, such as:

- Independent audits

- Certification systems

- Cross-border ethics review boards

These measures could ensure AI systems meet ethical and safety benchmarks before wide deployment.

A Shared Vision for the Future

The global effort to make AI responsible marks a turning point in how humanity approaches technology. The focus is shifting from winning the AI race to building the AI future together.

Sustained collaboration among governments, companies, and researchers is key to ensuring AI enhances opportunity, promotes fairness, and preserves human values.

The decisions being made today will shape how AI impacts generations to come. By choosing cooperation over competition and responsibility over recklessness, global AI leaders are proving that ethical innovation isn’t optional—it’s essential.