ChatGPT’s Hallucination Sparks Real Innovation: Soundslice Adds AI-Inspired Feature

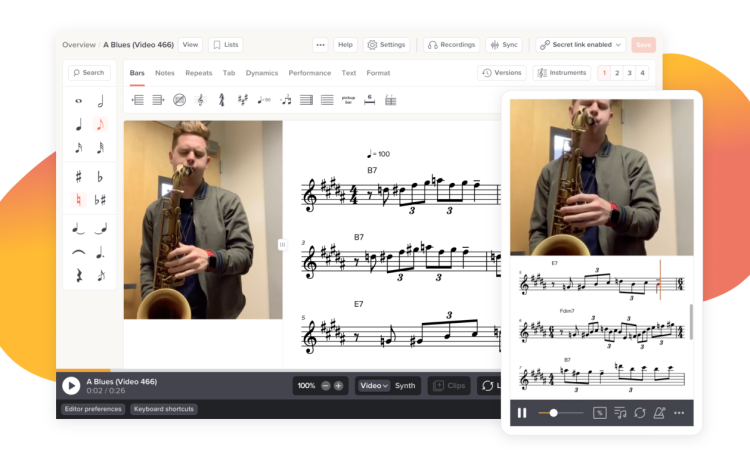

ChatGPT’s “hallucinations,” instances in which the AI states incorrect information with full confidence, are notorious. It is far rarer for such a hallucination to trigger real-world innovation, yet that is exactly what happened with Soundslice, an interactive music app. ChatGPT kept “imagining” a feature that didn’t exist until the app’s founder felt he had to do more than sweep the mistake under the carpet: he had to actually build it.

The Delusion: AI Said Soundslice Could Do the Impossible

Founder Adrian Holovaty began noticing something strange. Users were sharing screenshots of ChatGPT conversations in which the AI explained that Soundslice was “able to take ASCII tablature” (a text-based format for writing music) “and play it.” The problem? That feature didn’t exist. Yet ChatGPT kept suggesting it, repeatedly urging users to upload ASCII tab files to Soundslice so they could “hear them come alive.”

Holovaty learned of this after noticing that Soundslice’s error logs contained dozens of puzzling images of ASCII tab. It wasn’t the users’ fault: ChatGPT had misled them with bad guidance.

Developers Foot the Bill When AI Lies

These AI-generated claims were no laughing matter. New users were arriving at the site expecting Soundslice to do something it had never been built to do. Holovaty explained:

- Reputation damage: “New users came in with the wrong expectations.”

- Higher support burden: The error logs filled up with strange input, not real sheet music but ASCII tabs, which worried the team.

Instead of scanned sheet music, the logs revealed screenshots of ASCII tablature. Holovaty then tried ChatGPT for himself and, when he asked whether ASCII tabs would play on Soundslice, got replies claiming they would, “like magic.”

Turning Hallucination Into a Feature — Innovation or Folly?

Rather than calling out ChatGPT for its misstep, Holovaty dared to ask: “What if, instead of running disclaimers, we just built the feature?”

He and his colleagues debated the pros and cons:

| Option | Pros | Cons |
|---|---|---|
| Add support for ASCII tab | Meets demand that users have clearly signaled | Diverts development time; validates the AI’s error |
| Post a disclaimer instead | Corrects expectations; keeps scope on target | Does not address the root cause of the confusion (ChatGPT’s claims) |

Holovaty expressed his ambivalence publicly:

- Upside: “I’m glad to assist the users.”

- Downside: “It’s a weird place to be in when you’re in feature development because of misinformation.”

He also suggested that this could be the first recorded instance of an AI hallucination directly shaping a product feature, a threshold at which AI begins to influence product and business decisions in new ways.

When AI Fiction Inspires Real-World R&D

Holovaty’s choice to build the hallucinated feature set off conversations in developer communities. Commenters on Hacker News had mixed reactions:

- Some likened it to salespeople overpromising and pushing teams to build features that don’t exist yet.

- Others saw it as a rare but valid case of user-led innovation — AI hallucinations playing the part of a product manager.

Holovaty liked the salesperson comparison, stating: “That’s a very fitting and funny comparison, I think!”

Why It Matters: TechCrunch’s Take

TechCrunch’s Julie Bort found the incident both funny and thought-provoking. ChatGPT’s repeated false claims not only caused confusion but also threatened to erode public trust in the Soundslice brand.

The episode revealed two broader truths:

- Hallucinations aren’t benign – They can mislead users and warp their perception of a brand.

- Engineering may have to respond – Founders may feel pressure to build features that AI dreamed up.

This change could reshape how product teams operate in the age of generative AI.

Broader Considerations for AI-Driven User Experience

- AI as a product manager? Product teams may increasingly find themselves responding not just to human feedback but also to expectations that AI has created.

- Hallucination mitigation – Companies may need to keep a close eye on what AI says about their products and make hallucination testing part of standard quality assurance.

- Opportunity or liability? If hallucinations signal unmet user needs, should teams roll out features based on them, or does doing so entrench misinformation?
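The quality-assurance idea above can be sketched in a few lines. This is a minimal, hypothetical check, not a tool that Soundslice or TechCrunch describes: it assumes a team has already collected answers from AI assistants about its product, and it uses plain keyword matching against a hand-maintained feature list. The names `SUPPORTED`, `FEATURE_KEYWORDS`, `Finding`, and `find_unsupported_claims` are illustrative placeholders.

```python
# Hypothetical sketch: flag AI answers that claim features the product lacks.
# Assumptions: AI responses were already collected by the team; the feature
# names and keyword lists are placeholders; simple keyword matching stands in
# for whatever review process a real team would use.
from dataclasses import dataclass

# Features the product actually supports (hypothetical).
SUPPORTED = {"sheet music scanning", "audio sync"}

# Phrases suggesting a response is talking about a given feature (hypothetical).
FEATURE_KEYWORDS = {
    "sheet music scanning": ["scan sheet music", "upload a pdf"],
    "audio sync": ["sync with audio", "youtube sync"],
    "ascii tab import": ["ascii tab", "ascii tablature"],
}


@dataclass
class Finding:
    response_index: int  # position of the response in the input list
    feature: str         # unsupported feature the response appears to claim


def find_unsupported_claims(responses: list[str]) -> list[Finding]:
    """Return a Finding for each (response, unsupported feature) keyword match."""
    findings = []
    for i, text in enumerate(responses):
        lowered = text.lower()
        for feature, keywords in FEATURE_KEYWORDS.items():
            if feature in SUPPORTED:
                continue  # mentions of real features are fine
            if any(keyword in lowered for keyword in keywords):
                findings.append(Finding(i, feature))
    return findings
```

For example, `find_unsupported_claims(["Paste your ASCII tab into the app and it will play it."])` returns a single `Finding` for `"ascii tab import"`. Keyword matching is deliberately crude: it misses paraphrases and also flags negations such as “it can’t import ASCII tab,” so each finding still needs human review.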

Holovaty’s Design: It’s More About What You CAN Do Than What You CAN’T Do

Holovaty didn’t plaster the app with disclaimers; instead, he leaned into the concept and began building support for converting ASCII tabs to audio. He justified the decision on practical, user-first grounds:

- User empathy: “Build it if users expect it.”

- Agile thinking: His team could brainstorm and put ideas into action quickly.

- Culture cue: “Product by hallucination” may never become the new “agile,” but the world just got another testament to its value.

What Happens Now at Soundslice?

Soundslice is developing support for scanning and converting ASCII tab. There is no official release date yet, but development is in full swing, and the team is paying close attention to how AI shapes user expectations.
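To make “converting ASCII tab” concrete, here is a minimal sketch of the parsing step such a feature needs. It is not Soundslice’s implementation; it assumes six-string guitar tab in standard EADGBE tuning, one line per string labeled `e|`, `B|`, `G|`, `D|`, `A|`, `E|` (lowercase `e` for the high string), and plain fret numbers with no bends, slides, or rhythm marks. The function name `parse_ascii_tab` is hypothetical. It maps each fret number to a MIDI note number and uses the fret’s column position as a rough time order.

```python
# Hypothetical sketch of parsing six-string ASCII guitar tab into notes.
# Assumptions: standard EADGBE tuning, high string labeled lowercase "e",
# one line per string, plain fret numbers only (no bends, slides, rhythm).
import re

# MIDI note numbers of the open strings in standard tuning.
OPEN_STRINGS = {"e": 64, "B": 59, "G": 55, "D": 50, "A": 45, "E": 40}

# A tab line: a string label, a "|", then the rest of the line.
LINE_RE = re.compile(r"^([eBGDAE])\|(.*)$")


def parse_ascii_tab(tab: str) -> list[tuple[int, int]]:
    """Return (column, midi_note) pairs, sorted by column and then by pitch."""
    events = []
    for raw_line in tab.strip().splitlines():
        match = LINE_RE.match(raw_line.strip())
        if not match:
            continue  # skip chord names, lyrics, blank lines, and so on
        label, body = match.groups()
        open_note = OPEN_STRINGS[label]
        # Each run of digits is one fret number; its start column is its time slot.
        for fret in re.finditer(r"\d+", body):
            events.append((fret.start(), open_note + int(fret.group())))
    return sorted(events)
```

For example, a tab whose G, B, and high e strings show frets 0, 1, and 0 at columns 1, 3, and 5 yields `[(1, 55), (3, 60), (5, 64)]`, the notes G3, C4, and E4. Treating columns as time is a simplification: real-world tab often encodes rhythm loosely or not at all, which is one reason turning it into convincing playback is not trivial.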

Holovaty also hinted at a broader shift in strategy:

- Watching for hallucinations – Tracking how AI conversations about the product evolve.

- Better documentation – Setting clearer expectations for users from the start.

- Balancing building and teaching – Deciding when to build a feature and when to explain what the product already does.

Lessons for AI-Powered Teams

- Monitor hallucinations – Track how AI platforms describe your product, and watch analytics and error logs for signs of AI-driven expectations.

- Decide: build or disclaim? – Ask if hallucinated features really serve users’ needs.

- Stay true to users – Empathy builds trust, even when AI amplifies mistakes.

- Leverage hallucinations to find an edge – They can point to features worth building down the road.

- Control the story of your brand – Publish clear, accurate information about your product in advance so AI tools have better sources to draw on.

A Shift in Human-AI Collaboration

This case bridges theoretical and practical AI concepts. What used to be seen purely as a flaw, hallucination, can fuel innovation if teams are fast, flexible, and attentive.

It’s a brave new world: AI is not just writing fiction — it’s offering potential products, too.

Final Thoughts

ChatGPT’s claim that Soundslice could import and play ASCII tablature was demonstrably false, but rather than simply refuting it, the Soundslice team used the misinformation as a prompt for innovation. The episode illustrates a new set of tensions:

- Trust vs. hallucination – People trust AI, and when it misleads them, they act on what it says.

- Planned vs. emergent features – Hallucination-based development can be at odds with long-term strategy.

- New measures of fit – Beyond accuracy, products may increasingly be judged by how well they match what AI tools say about them.

As generative AI becomes further embedded in tools, apps, and marketing, hallucinations aren’t going to disappear. But when discovered, they can open up unexpected paths — turning fiction into working features, and mistakes into user delight.