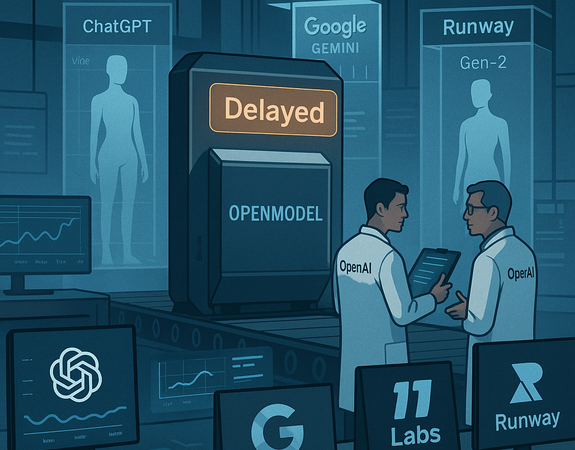

OpenAI Delays Release of Open Model—Again, Sparking Frustration and Debate

OpenAI, one of the world’s most prominent artificial intelligence research labs, has once again delayed the release of its much-anticipated open-source AI model. The announcement, made earlier this week, marks the second major postponement in the rollout of a model that the company previously hailed as a step toward democratizing advanced AI capabilities. The delay has reignited debates over transparency, ethics, and competition in the rapidly evolving AI sector.

A Promised Open Model, Still Behind Closed Doors

In early 2024, OpenAI promised to release a powerful new model to the open-source community by the end of the year. The model, intended to compete with Meta’s LLaMA and Mistral’s open releases, was expected to be a leaner version of GPT-4, stripped of proprietary safety layers but retaining much of the core intelligence and functionality.

However, the deadline came and went with no release. Instead, OpenAI cited ongoing safety evaluations and a need to develop stronger mitigation tools.

As of July 2025, the company has again delayed the launch, stating that “new findings in misuse risk” have compelled it to revisit the scope and conditions under which the model might be released, if at all.

The Safety Argument

OpenAI’s official statement emphasized concerns around:

- Misinformation

- Autonomous cyberattacks

- Large-scale manipulation

“As our research progresses, we are gaining a deeper understanding of the dual-use nature of powerful AI models,” said Mira Murati, OpenAI’s Chief Technology Officer.

“While we remain committed to our mission of ensuring that artificial general intelligence benefits all of humanity, we must act cautiously and responsibly.”

The company further pointed to recent misuse of other open-source models for:

- Deepfake production

- Phishing campaigns

- Automated political influence operations

By withholding the model, OpenAI argues it is minimizing potential real-world harms.

This cautious stance aligns with OpenAI’s broader safety strategy, which includes:

- Staged deployment

- Monitoring partnerships

- Internal red-teaming efforts

However, critics argue that this narrative is increasingly being used as a convenient shield for strategic advantage.

A Growing Rift in the Open-Source AI Movement

The repeated delays have caused significant friction between OpenAI and the open-source AI community. Developers, researchers, and startups had hoped to leverage the open model to build:

- Tools

- Applications

- Localized AI systems tailored to specific regions and needs

“This is starting to feel like a bait-and-switch,” said Dr. Leah Carpenter, a machine learning researcher and advocate of open-source AI.

“OpenAI built its early models with the help of public research and community trust. Now that they’ve achieved dominance, they’re pulling up the ladder.”

Other voices echo this concern, arguing that companies like Meta, Mistral, and Cohere are proving that openness and safety can coexist through licensing restrictions and responsible partnerships.

“You don’t have to give the model to everyone,” said Anuj Patel, a software engineer at an AI startup.

“But you can work with researchers and developers under structured terms. Just keeping it locked away indefinitely hurts innovation.”

Commercial Interests at Play?

Critics also question whether the delay is motivated more by commercial strategy than by safety. OpenAI’s current flagship model, GPT-4.5 Turbo, powers several paid services, including:

- Microsoft Copilot

- ChatGPT Plus

- Enterprise APIs

Releasing a powerful open model could undercut these revenue streams, especially if competitors integrate it into low-cost alternatives.

“There’s a conflict of interest here,” said Greg Stevens, an AI policy analyst.

“OpenAI is operating in a market-driven environment while trying to maintain the moral high ground. Those goals are increasingly hard to reconcile.”

Adding to the tension is OpenAI’s original mission, which focused on:

- Openness

- Long-term safety

- Avoiding competitive pressures

However, critics argue that OpenAI’s operational philosophy has changed since the company shifted to a capped-profit model in 2019 and partnered closely with Microsoft.

The Global Backdrop

This delay comes amid global debates over AI governance:

- The European Union’s AI Act mandates model transparency and risk-based classification.

- In the U.S., the White House’s AI executive order stresses responsible deployment and public accountability.

Several NGOs and watchdog groups have urged OpenAI to share:

- Details on the model’s capabilities

- Internal risk assessments

- Testing processes

“We need clear, auditable standards for when and how powerful models are released,” said Sofia Larkin, Director of the AI Governance Coalition.

“Right now, it feels like every lab is making up its own rules as it goes.”

What’s Next?

Despite mounting criticism, OpenAI has not ruled out the eventual release of its open model. The company says it is working with:

- Ethicists

- Policy leaders

- Red-team experts

A newly formed internal task force is reportedly evaluating:

- Limited access scenarios

- Use in academic research

- Small-scale pilots

Meanwhile, competitors are advancing:

- Meta is expected to release the next iteration of LLaMA by late 2025.

- Hugging Face and other open-source groups are supporting independently trained large language models.

This evolution is giving rise to a fragmented but thriving open-source ecosystem—one that may soon no longer rely on OpenAI’s participation.

Conclusion: A Tipping Point?

OpenAI’s repeated delays in releasing its open model may mark a turning point in the AI landscape. What began as a collaborative race for innovation is now becoming a story of:

- Strategic opacity

- Commercial protectionism

- Ethical complexity

As the world seeks to balance innovation with responsibility, OpenAI’s next move will be pivotal. It could either help shape industry-wide norms or further fracture the community it once helped unite.

Until then, developers, researchers, and the broader public are left waiting—once again—for a promise that remains just out of reach.